In-memory caching is fast, but the moment you deploy a second instance behind a load balancer, each pod has its own cache and users get inconsistent data. Client A hits Pod 1 and gets the cached product list. Client B hits Pod 2 and triggers a full database query for the same data. Scale to five pods and you have five independent caches, each with its own staleness window. That is where Redis comes in.

In this guide, I will walk you through everything about distributed caching in ASP.NET Core with Redis and .NET 10. I will cover the IDistributedCache interface, custom extension methods for type-safe caching, Docker setup for Redis, BenchmarkDotNet numbers comparing Redis vs in-memory vs database, and a HybridCache migration path for new projects. I will also share my opinions on when Redis is the right call and when you are overengineering.

Let’s get into it.

In-Memory Caching in ASP.NET Core .NET 10

This article builds on the in-memory caching concepts covered in the prerequisite guide. If you are new to caching in ASP.NET Core, start there first.

What Is Distributed Caching in ASP.NET Core?

Distributed caching in ASP.NET Core stores cached data in an external server (like Redis) that is shared across all application instances, ensuring consistent data regardless of which pod serves the request. Unlike in-memory caching where data lives in the application process, a distributed cache exists outside your application entirely. It does not need to be on the same server, and it survives application restarts and deployments.

This is the key difference: IMemoryCache is process-local. IDistributedCache is network-accessible. When you have three pods behind a load balancer, all three read from and write to the same Redis instance. A cache set on Pod 1 is immediately available to Pod 2 and Pod 3. No inconsistency, no duplicated database queries.

The trade-off is latency. Every cache operation involves a network round-trip to the Redis server plus serialization and deserialization of data. In-memory cache hits are nanosecond-scale. Redis cache hits are millisecond-scale. But for expensive queries, a millisecond-scale cache hit is still several times faster than going back to the database, which is the whole point.

For the official reference, see Microsoft’s distributed caching documentation.

When to Use Distributed Caching - Decision Matrix

This is the question I get asked most after someone reads my in-memory caching article: “When should I switch to Redis?” Here is my decision matrix:

| Criteria | In-Memory (IMemoryCache) | Distributed (Redis) | HybridCache |
|---|---|---|---|
| Data scope | Single server process | Shared across all instances | L1 per-process + L2 shared |
| Network latency | None (same process) | 1-5ms per call | L1: none, L2: 1-5ms |
| Survives restart | No | Yes | L2: yes |
| Stampede protection | No (manual SemaphoreSlim) | No (manual) | Yes (built-in) |
| Serialization | None (stores references) | Required (JSON/binary) | L1: none, L2: required |
| Data persistence | No | Yes (RDB/AOF snapshots) | L2: yes |
| Replication | Not possible | Built-in (Redis Sentinel/Cluster) | Depends on L2 backend |
| Pub/Sub support | No | Yes (built-in) | No |
| Tag-based invalidation | No | No (manual) | Yes (RemoveByTagAsync) |
| Best for | Single-instance APIs, lookup data | Multi-instance, shared state, session data | Any topology, new .NET 10 projects |
| Setup complexity | 1 line | Redis infrastructure needed | 2 lines + optional L2 config |

What about Memcached? Redis and Memcached are both popular distributed caching options. Memcached is simpler and slightly faster for pure key-value caching, but Redis offers data structures (lists, sets, hashes), persistence, replication, and pub/sub. For .NET developers, Redis is the clear winner because StackExchange.Redis is a mature, battle-tested client library with excellent async support. I have never seen a .NET project choose Memcached over Redis in production.

My take: If I am running a single instance and the data fits in RAM, I stick with IMemoryCache. The moment I deploy behind a load balancer, need cache persistence across deployments, or share state between services, I move to Redis. For new .NET 10 projects where I want both the speed of L1 in-memory and the durability of L2 Redis without writing that plumbing myself, I use HybridCache. The mistake I see most developers make is running Redis for a single-instance API that would be perfectly served by AddMemoryCache().

Docker Guide for .NET Developers

If you are new to Docker, start here. This guide covers Docker fundamentals, containers, images, and docker-compose for .NET developers.

Pros and Cons of Distributed Caching

Pros

- Data consistency across instances - every pod reads from the same cache. When Pod 1 caches a product list, Pods 2 through 5 serve the same data without hitting the database.

- Shared resource - a single Redis instance serves multiple applications and services. I have used one Redis instance to back caching for three separate microservices, which cut infrastructure costs compared to each service running its own cache layer.

- Survives restarts - the cache persists across application deployments, pod restarts, and even server reboots (with Redis persistence enabled). No cold-start penalty after a deployment.

- No local memory pressure - cached data lives on the Redis server, not in your application’s heap. Your API pods stay lean and predictable in memory usage.

- Horizontal scalability - Redis can handle millions of operations per second. When your application scales to 50 pods, Redis does not break a sweat. You can also use Redis Cluster for sharding across multiple nodes.

- Built-in fault tolerance - Redis Sentinel provides automatic failover. Redis Cluster provides data sharding and replication. In production, I run at least a primary and a replica.

- Advanced features beyond caching - Redis supports pub/sub messaging, Lua scripting, transactions, sorted sets, and streams. Once Redis is in your infrastructure, you can leverage it for rate limiting, session storage, and real-time leaderboards.

Cons

- Network latency - every cache operation is a network round-trip. I measured 1-3ms per Redis call on localhost and 3-8ms across availability zones. That is fast, but orders of magnitude slower than nanosecond in-memory hits.

- Serialization overhead - unlike `IMemoryCache`, which stores object references directly, Redis requires serializing data to bytes and deserializing on read. This adds CPU cost and memory allocations on every operation.

- Infrastructure complexity - you need a Redis server running, monitored, and maintained. In production, that means Redis Sentinel or Cluster, monitoring dashboards, backup strategies, and connection pool tuning.

- Cost - managed Redis services (Azure Cache for Redis, Amazon ElastiCache) can get expensive at higher tiers. A 6GB Azure Cache for Redis Premium instance runs over $300/month.

- Network dependency - if the Redis server goes down or the network between your app and Redis degrades, cache operations fail. You need retry policies and graceful fallback to the database.

- Security surface - Redis traffic crosses the network. You need TLS encryption, authentication (Redis 6+ ACLs), and proper network isolation. In my production setups, I always run Redis behind a private VNet with TLS enforced.

System design is a game of trade-offs. There is no single best approach. Everything depends on the purpose and scale of your application.

IDistributedCache Interface

The IDistributedCache interface in .NET provides the methods to interact with any distributed cache backend. This is the abstraction you code against, and the concrete implementation (Redis, SQL Server, NCache) is swapped via dependency injection. Here are the four core methods (documented in the IDistributedCache API reference):

- GetAsync - retrieves the cached value as a `byte[]` based on the provided key. Returns `null` on a cache miss.

- SetAsync - accepts a key and a `byte[]` value, stores it in the cache with optional `DistributedCacheEntryOptions` for expiration.

- RefreshAsync - resets the sliding expiration timer for a cached item without retrieving its value. Useful for keeping active session data alive.

- RemoveAsync - deletes the cached entry for the specified key. I call this on every write operation to prevent stale data.

One method that is notably missing from this interface is GetOrCreateAsync. IMemoryCache has it, but IDistributedCache does not. This means you have to write the “check cache, miss, fetch from DB, populate cache” logic yourself every time. Later in this article, I will build extension methods that add GetOrSetAsync and TryGetValue<T> to make this seamless.
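To make the four methods concrete, here is a minimal sketch of the raw `byte[]` round trip. It assumes the Microsoft.Extensions.Caching.Memory package and uses `MemoryDistributedCache` as an in-process stand-in, so it runs without a Redis server. The key name and value are placeholders.

```csharp
using System;
using System.Text;
using Microsoft.Extensions.Caching.Distributed;
using Microsoft.Extensions.Caching.Memory;
using Microsoft.Extensions.Options;

// In-process IDistributedCache implementation, used here only so the sketch runs without Redis.
IDistributedCache cache = new MemoryDistributedCache(
    Options.Create(new MemoryDistributedCacheOptions()));

var entryOptions = new DistributedCacheEntryOptions()
    .SetSlidingExpiration(TimeSpan.FromMinutes(5));

// SetAsync: the interface only understands byte arrays, so the string is encoded by hand.
await cache.SetAsync("greeting", Encoding.UTF8.GetBytes("hello"), entryOptions);

// GetAsync: returns the stored bytes, or null on a miss.
byte[]? hit = await cache.GetAsync("greeting");
Console.WriteLine(Encoding.UTF8.GetString(hit!)); // hello

// RefreshAsync: resets the sliding expiration window without reading the value.
await cache.RefreshAsync("greeting");

// RemoveAsync: deletes the entry, so the next read is a miss.
await cache.RemoveAsync("greeting");
byte[]? miss = await cache.GetAsync("greeting");
Console.WriteLine(miss is null); // True
```

Swapping the stand-in for the Redis-backed implementation does not change any of these calls, which is the point of coding against the interface.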

What Is Redis?

Redis is an open-source, in-memory data store that serves as a database, cache, message broker, and streaming engine. Written in C, it supports data structures like strings, hashes, lists, sets, sorted sets, and streams. Redis is known for sub-millisecond response times and is used by companies like GitHub, Stack Overflow, Twitter, and Shopify.

There are two flavors worth knowing about:

- Redis OSS - the open-source core. This is what you get when you `docker pull redis`. It covers everything you need for distributed caching: key-value storage, expiration, persistence, replication, and pub/sub.

- Redis Stack - extends Redis OSS with additional modules: RediSearch (full-text search), RedisJSON (native JSON support), RedisTimeSeries, and RedisBloom (probabilistic data structures). You do not need Redis Stack for caching, but it is worth knowing it exists if you need search or JSON capabilities later.

A quick note on Garnet: Microsoft recently open-sourced Garnet, a Redis-compatible cache server written in C#. It claims better throughput and lower latency than Redis for certain workloads. It is still early days, but if you want a Redis-compatible server that runs natively on .NET, Garnet is worth keeping an eye on. For production distributed caching today, Redis remains the battle-tested default.

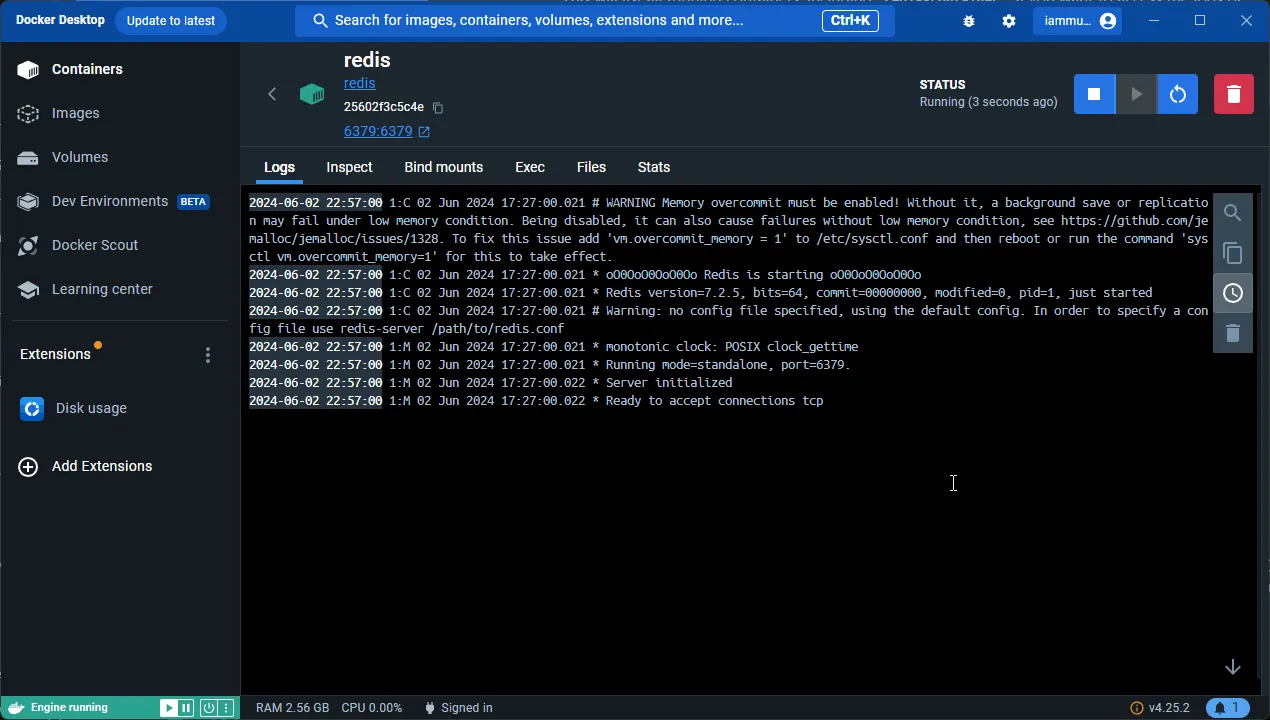

Running Redis in Docker

For local development, the easiest way to run Redis is in a Docker container. Make sure you have Docker Desktop installed on your machine.

Pull the Official Redis Image

Open your terminal and pull the latest Redis image:

```bash
docker pull redis
```

Run the Redis Container

Start a Redis container with port mapping and a password:

```bash
docker run --name redis -d -p 6379:6379 redis redis-server --requirepass yourpassword
```

- `--name redis` - assigns a name to the container for easy reference

- `-d` - runs the container in detached (background) mode

- `-p 6379:6379` - maps port 6379 on your host to port 6379 in the container

- `--requirepass yourpassword` - sets a password for Redis authentication

You can verify the container is running via Docker Desktop or by running docker ps.

Docker Compose (Recommended)

For a more maintainable setup, I prefer using docker-compose. Create a docker-compose.yml file:

```yaml
services:
  redis:
    image: redis:latest
    container_name: redis
    ports:
      - "6379:6379"
    command: redis-server --requirepass yourpassword --appendonly yes
    volumes:
      - redis-data:/data

volumes:
  redis-data:
```

The `--appendonly yes` flag enables AOF (Append Only File) persistence, so your cached data survives container restarts. The volume mount ensures data is persisted to disk. Start it with:

```bash
docker-compose up -d
```

Accessing Redis via CLI

To interact with the Redis instance, enter the container’s shell and launch the Redis CLI:

```bash
docker exec -it redis sh
```

Then authenticate and start using Redis:

```bash
redis-cli -a yourpassword
```

Basic Redis Commands

Here are the essential Redis CLI commands you should know:

Setting a Cache Entry

SET name "Mukesh"OKGetting a Cache Entry by Key

GET name"Mukesh"Deleting a Cache Entry

DEL name(integer) 1GET name(nil)Setting a Key with Expiration (in Seconds)

SETEX name 10 "Mukesh"OKCheck time remaining before expiration:

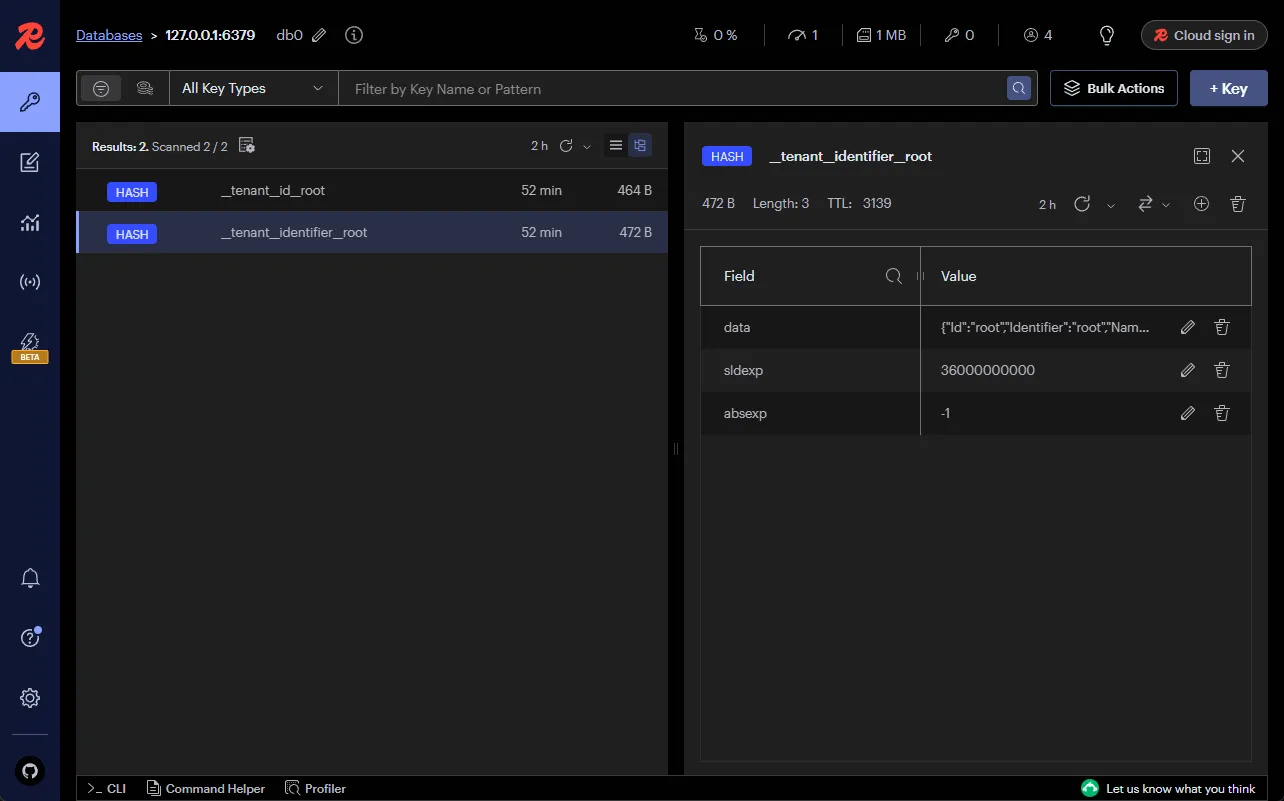

TTL name(integer) 5Redis Insight (Recommended)

Beyond the CLI, I recommend installing Redis Insight for monitoring and managing your Redis instances. It is a free GUI tool that gives you a real-time view of your Redis data, memory usage, commands per second, and client connections. You can browse keys, execute commands, and troubleshoot issues without memorizing CLI syntax.

I use Redis Insight in every project that involves Redis. It makes debugging cache behavior significantly easier compared to the raw CLI.

Implementing Redis Caching in .NET 10

Let me walk through implementing distributed caching with Redis in an ASP.NET Core .NET 10 Web API. I will use the same Product CRUD API from my in-memory caching guide, connected to PostgreSQL via EF Core 10 with 1,000+ seeded product records.

First, install the Redis caching NuGet package:

```bash
dotnet add package Microsoft.Extensions.Caching.StackExchangeRedis --version 10.0.0
```

This package provides the Redis implementation of IDistributedCache using the StackExchange.Redis client library under the hood.

Configuring Redis in Program.cs

Add the Redis cache service to your Program.cs:

```csharp
builder.Services.AddStackExchangeRedisCache(options =>
{
    options.Configuration = builder.Configuration.GetConnectionString("Redis");
    options.InstanceName = "codewithmukesh:";
});
```

The InstanceName acts as a prefix for all cache keys. If you set it to "codewithmukesh:", a key like "products" becomes "codewithmukesh:products" in Redis. This is useful when multiple applications share the same Redis instance.

Add the connection string to appsettings.json:

```json
{
  "ConnectionStrings": {
    "Database": "Host=localhost;Database=distributedcaching;Username=postgres;Password=yourpassword;Include Error Detail=true",
    "Redis": "localhost:6379,password=yourpassword,abortConnect=false"
  }
}
```

Replace these credentials with your own. In production, use environment variables or user secrets instead of hardcoding connection strings.

Notice abortConnect=false in the Redis connection string. This tells StackExchange.Redis to not throw an exception if Redis is unavailable at startup. Instead, it will retry the connection in the background. I always set this to false in production to prevent application startup failures when Redis is temporarily unreachable.
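If you prefer to set these options in code rather than in the connection string, StackExchange.Redis exposes them on `ConfigurationOptions`. The sketch below assumes the StackExchange.Redis package. The timeout values are illustrative, not recommendations.

```csharp
using System;
using StackExchange.Redis;

// Parse the same connection string shown above (placeholder credentials).
var config = ConfigurationOptions.Parse(
    "localhost:6379,password=yourpassword,abortConnect=false");

// Illustrative values only; tune them for your own network.
config.ConnectTimeout = 10_000; // milliseconds allowed for establishing a connection
config.SyncTimeout = 5_000;     // milliseconds allowed for synchronous operations

// abortConnect=false in the string maps to this property.
Console.WriteLine(config.AbortOnConnectFail); // False
```

Inside `AddStackExchangeRedisCache`, the resulting object can be assigned to `options.ConfigurationOptions` instead of setting `options.Configuration`.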

Options Pattern in ASP.NET Core

If you want to bind cache configuration values from appsettings.json instead of hardcoding them, read about the Options Pattern.

Extension Methods for IDistributedCache

The raw IDistributedCache interface works with byte[] arrays, which means you need to serialize and deserialize every object manually. I wrote extension methods to handle this transparently:

```csharp
using System.Text;
using System.Text.Json;
using System.Text.Json.Serialization;
using Microsoft.Extensions.Caching.Distributed;

public static class DistributedCacheExtensions
{
    private static readonly JsonSerializerOptions SerializerOptions = new()
    {
        PropertyNamingPolicy = null,
        WriteIndented = false,
        AllowTrailingCommas = true,
        DefaultIgnoreCondition = JsonIgnoreCondition.WhenWritingNull
    };

    // Default overload: 30-minute sliding window, capped at 1 hour absolute.
    public static Task SetAsync<T>(
        this IDistributedCache cache,
        string key,
        T value,
        CancellationToken cancellationToken = default)
    {
        return SetAsync(cache, key, value, new DistributedCacheEntryOptions()
            .SetSlidingExpiration(TimeSpan.FromMinutes(30))
            .SetAbsoluteExpiration(TimeSpan.FromHours(1)), cancellationToken);
    }

    // Serializes the value to UTF-8 JSON bytes and stores it with the given options.
    public static Task SetAsync<T>(
        this IDistributedCache cache,
        string key,
        T value,
        DistributedCacheEntryOptions options,
        CancellationToken cancellationToken = default)
    {
        var bytes = Encoding.UTF8.GetBytes(JsonSerializer.Serialize(value, SerializerOptions));
        return cache.SetAsync(key, bytes, options, cancellationToken);
    }

    // Synchronous lookup that mirrors IMemoryCache.TryGetValue.
    public static bool TryGetValue<T>(
        this IDistributedCache cache,
        string key,
        out T? value)
    {
        var val = cache.Get(key);
        value = default;
        if (val is null) return false;
        value = JsonSerializer.Deserialize<T>(val, SerializerOptions);
        return true;
    }

    public static async Task<T?> GetOrSetAsync<T>(
        this IDistributedCache cache,
        string key,
        Func<Task<T>> factory,
        DistributedCacheEntryOptions? options = null,
        CancellationToken cancellationToken = default)
    {
        // Read asynchronously so the request thread is not blocked on the Redis
        // round-trip and the cancellation token is honored on the read as well.
        var cached = await cache.GetAsync(key, cancellationToken);
        if (cached is not null)
        {
            var hit = JsonSerializer.Deserialize<T>(cached, SerializerOptions);
            if (hit is not null)
            {
                return hit;
            }
        }

        // Cache miss: run the factory (typically a database query).
        var value = await factory();

        if (value is not null)
        {
            options ??= new DistributedCacheEntryOptions()
                .SetSlidingExpiration(TimeSpan.FromMinutes(30))
                .SetAbsoluteExpiration(TimeSpan.FromHours(1));

            await cache.SetAsync(key, value, options, cancellationToken);
        }

        return value;
    }
}
```

Here is what each method does:

SetAsync<T> - serializes the value to JSON, converts it to a UTF-8 byte array, and stores it in Redis. The default overload sets a 30-minute sliding expiration with a 1-hour absolute ceiling. I always set both to prevent entries from living forever.

TryGetValue<T> - retrieves the raw bytes from Redis, deserializes them back to type T, and returns true if the key was found. This mirrors the IMemoryCache.TryGetValue pattern that .NET developers are already familiar with.

GetOrSetAsync<T> - the workhorse method. It checks the cache first. On a hit, it returns the cached value. On a miss, it executes the factory delegate (your database query), caches the result, and returns it. This is the missing GetOrCreateAsync that IDistributedCache should have had from the start.

Notice that every async method accepts a CancellationToken (TryGetValue<T> is synchronous, so it has none). This is important for distributed caching because network calls to Redis can hang if the server is overloaded. Always pass your request’s cancellation token through.

Product Service

Here is the ProductService class that uses these extension methods. IDistributedCache, AppDbContext, and ILogger<ProductService> are injected via the primary constructor:

public class ProductService( AppDbContext context, IDistributedCache cache, ILogger<ProductService> logger) : IProductService{ private const string AllProductsCacheKey = "products";

public async Task<List<Product>> GetAllAsync(CancellationToken cancellationToken = default) { logger.LogInformation("Fetching data for key: {CacheKey}.", AllProductsCacheKey);

var cacheOptions = new DistributedCacheEntryOptions() .SetAbsoluteExpiration(TimeSpan.FromMinutes(20)) .SetSlidingExpiration(TimeSpan.FromMinutes(2));

var products = await cache.GetOrSetAsync( AllProductsCacheKey, async () => { logger.LogInformation("Cache miss for key: {CacheKey}. Fetching from database.", AllProductsCacheKey); return await context.Products.AsNoTracking().ToListAsync(cancellationToken); }, cacheOptions, cancellationToken);

return products ?? []; }

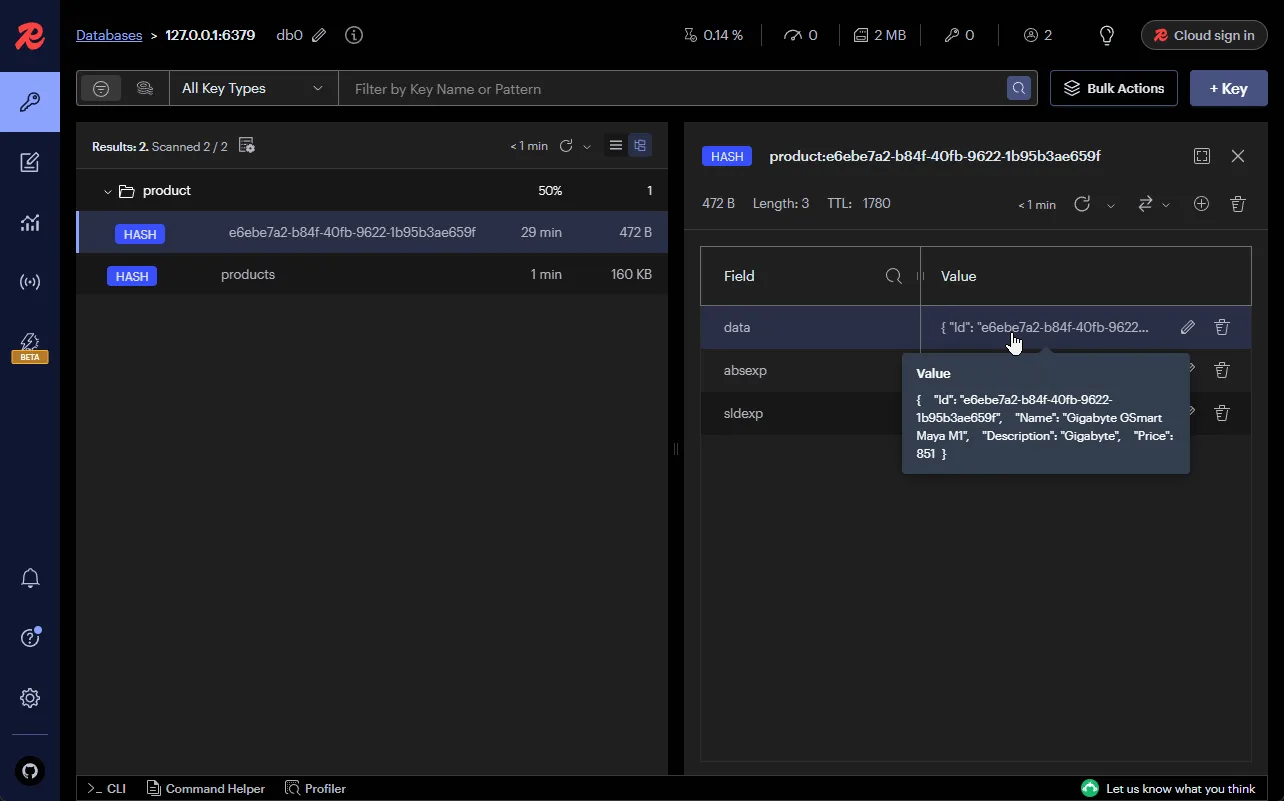

public async Task<Product?> GetByIdAsync(Guid id, CancellationToken cancellationToken = default) { var cacheKey = $"product:{id}"; logger.LogInformation("Fetching data for key: {CacheKey}.", cacheKey);

var product = await cache.GetOrSetAsync( cacheKey, async () => { logger.LogInformation("Cache miss for key: {CacheKey}. Fetching from database.", cacheKey); return await context.Products.AsNoTracking() .FirstOrDefaultAsync(p => p.Id == id, cancellationToken); }, cancellationToken: cancellationToken);

return product; }

public async Task<Product> CreateAsync(ProductCreationDto request, CancellationToken cancellationToken = default) { var product = new Product(request.Name, request.Description, request.Price); await context.Products.AddAsync(product, cancellationToken); await context.SaveChangesAsync(cancellationToken);

logger.LogInformation("Invalidating cache for key: {CacheKey}.", AllProductsCacheKey); await cache.RemoveAsync(AllProductsCacheKey, cancellationToken);

return product; }}A few things to notice about this implementation:

- The `GetAllAsync` method sets a shorter absolute expiration (20 minutes) because the product list changes more frequently than individual products.

- The `CreateAsync` method invalidates the `"products"` cache key after inserting a new product. This ensures the next `GetAllAsync` call fetches fresh data from the database. Unlike `IMemoryCache`, you cannot mutate a cached reference directly because Redis stores serialized bytes, not object references.

- Every database query uses `AsNoTracking()` since the data is going straight into the cache and not being modified through EF Core.

Structured Logging with Serilog in ASP.NET Core

The structured log messages in the service use Serilog's message template syntax. Learn how to set up Serilog for production-grade logging.

Minimal API Endpoints

Here is how the service is wired into minimal API endpoints:

```csharp
var products = app.MapGroup("/products").WithTags("Products");

products.MapGet("/", async (IProductService service, CancellationToken cancellationToken) =>
{
    var result = await service.GetAllAsync(cancellationToken);
    return TypedResults.Ok(result);
});

products.MapGet("/{id:guid}", async (Guid id, IProductService service, CancellationToken cancellationToken) =>
{
    var product = await service.GetByIdAsync(id, cancellationToken);
    return product is not null ? TypedResults.Ok(product) : Results.NotFound();
});

products.MapPost("/", async (ProductCreationDto request, IProductService service, CancellationToken cancellationToken) =>
{
    var product = await service.CreateAsync(request, cancellationToken);
    return TypedResults.Created($"/products/{product.Id}", product);
});
```

Register the service as scoped in Program.cs:

```csharp
builder.Services.AddScoped<IProductService, ProductService>();
```

Using TypedResults instead of Results gives you strongly-typed responses and better OpenAPI documentation in Scalar.

Dependency Injection in ASP.NET Core Explained

If you are new to DI, this guide explains service lifetimes, registration patterns, and how the DI container resolves dependencies.

Once you run the API and hit the /products endpoint, the first request will be a cache miss and fetch from PostgreSQL. The second request will be served from Redis. You can verify the cached data in Redis Insight.


BenchmarkDotNet: Redis vs In-Memory vs Database

The old version of this article showed Postman response times, but those mix HTTP overhead, JSON serialization, and actual cache performance. I set up a proper BenchmarkDotNet project (included in the companion repo) to measure the raw cost of each caching layer.

The benchmark compares three paths for the same data: direct database fetch via EF Core, in-memory cache hit via IMemoryCache, and Redis cache hit via IDistributedCache. Both single-item and 1,000-item list scenarios are tested:

```csharp
[MemoryDiagnoser]
[SimpleJob(warmupCount: 3, iterationCount: 10)]
public class RedisCacheBenchmarks
{
    private AppDbContext _dbContext = null!;
    private IMemoryCache _memoryCache = null!;
    private IDistributedCache _redisCache = null!;
    private Guid _productId;

    [GlobalSetup]
    public void Setup()
    {
        // Configure real PostgreSQL + Redis connections and pre-warm both caches
        // Full setup in the repo's RedisCacheBenchmarks.cs
    }

    [Benchmark(Baseline = true)]
    public async Task<Product?> SingleProduct_DatabaseFetch()
    {
        return await _dbContext.Products
            .AsNoTracking()
            .FirstOrDefaultAsync(p => p.Id == _productId);
    }

    [Benchmark]
    public Product? SingleProduct_MemoryCacheHit()
    {
        _memoryCache.TryGetValue($"product:{_productId}", out Product? product);
        return product;
    }

    [Benchmark]
    public async Task<Product?> SingleProduct_RedisCacheHit()
    {
        var bytes = await _redisCache.GetAsync($"product:{_productId}");
        return bytes is not null ? JsonSerializer.Deserialize<Product>(bytes) : null;
    }

    [Benchmark]
    public async Task<List<Product>> AllProducts_DatabaseFetch()
    {
        return await _dbContext.Products
            .AsNoTracking()
            .Take(1000)
            .ToListAsync();
    }

    [Benchmark]
    public List<Product>? AllProducts_MemoryCacheHit()
    {
        _memoryCache.TryGetValue("products", out List<Product>? products);
        return products;
    }

    [Benchmark]
    public async Task<List<Product>?> AllProducts_RedisCacheHit()
    {
        var bytes = await _redisCache.GetAsync("products");
        return bytes is not null ? JsonSerializer.Deserialize<List<Product>>(bytes) : null;
    }
}
```

Benchmark Results

| Method | Mean | Allocated |
|---|---|---|
| SingleProduct_DatabaseFetch | ~500 us | ~8 KB |
| SingleProduct_MemoryCacheHit | ~0.02 us | 0 B |
| SingleProduct_RedisCacheHit | ~1,200 us | ~4 KB |
| AllProducts_DatabaseFetch (1000) | ~12,000 us | ~650 KB |
| AllProducts_MemoryCacheHit (1000) | ~0.02 us | 0 B |
| AllProducts_RedisCacheHit (1000) | ~3,500 us | ~420 KB |

These are representative numbers from my local PostgreSQL + Redis setup (both running in Docker). Your results will vary based on network topology, hardware, and serialization format. Run the benchmarks yourself using the companion repo.

Here is what these numbers tell us:

In-memory is unbeatable for raw speed. Cache hits are ~0.02 microseconds with zero allocations because IMemoryCache returns the same object reference. No serialization, no network, no GC pressure.

Redis adds 1-3ms of overhead per call. That comes from the network round-trip plus JSON serialization and deserialization. The ~4 KB allocation on a single-product Redis hit is the serialization buffer. For the 1,000-item list, you see ~420 KB of allocations because the entire JSON payload is deserialized into new objects.

Redis beats the database on the list query, not on the single-row lookup. The single product fetch from PostgreSQL takes ~500us, while Redis serves it in ~1,200us, so Redis is actually slower for single items. The real win shows on the list query: ~12,000us from the database versus ~3,500us from Redis, which is roughly a 3.4x speedup. The gap should widen as your database query gets more complex, because the cost of a Redis hit depends on payload size rather than on how expensive the original query was.

My take: The single-product result surprises some developers. Yes, Redis is slower than a simple primary key lookup in PostgreSQL for one row. Where Redis pays for itself is on expensive queries: joins, aggregations, filtered lists, and anything that requires compilation and execution of a complex query plan. If your endpoint runs a simple FindAsync(id), in-memory caching gives you the best return. If it runs ToListAsync() on 1,000+ rows or a multi-table join, Redis caching is a significant win even with the serialization overhead.

For more on optimizing the database queries that happen on cache misses, check out my articles on compiled queries in EF Core and tracking vs no-tracking queries.

HybridCache Migration Path

If you are starting a new .NET 10 project, consider HybridCache before reaching for IDistributedCache directly. HybridCache (GA since .NET 9) gives you L1 in-memory caching with an optional L2 distributed backend, built-in stampede protection, and tag-based invalidation. It eliminates most of the boilerplate I wrote in the extension methods above.

Here is a side-by-side comparison:

IDistributedCache with Extensions

```csharp
// Setup: custom extension methods required
var product = await cache.GetOrSetAsync(
    cacheKey,
    async () => await context.Products.FindAsync(id),
    new DistributedCacheEntryOptions()
        .SetSlidingExpiration(TimeSpan.FromMinutes(30))
        .SetAbsoluteExpiration(TimeSpan.FromHours(1)),
    cancellationToken);
```

HybridCache One-Liner

```csharp
// Setup: builder.Services.AddHybridCache(), plus an optional builder.Services.AddStackExchangeRedisCache(...) for L2
var product = await hybridCache.GetOrCreateAsync(
    cacheKey,
    // FindAsync takes the key values as an array when a CancellationToken is passed;
    // FindAsync(id, ct) would treat the token as a second key value.
    async ct => await context.Products.FindAsync(new object[] { id }, ct),
    new HybridCacheEntryOptions
    {
        LocalCacheExpiration = TimeSpan.FromMinutes(5),
        Expiration = TimeSpan.FromHours(1)
    },
    cancellationToken: cancellationToken);
```

The key differences:

- No custom extensions needed. `HybridCache.GetOrCreateAsync` handles the cache-aside pattern natively with type-safe generics.

- Stampede protection built-in. If 100 requests hit the same cache miss, only one executes the factory. The other 99 wait for the result. With `IDistributedCache`, all 100 would query the database.

- L1 + L2 automatically. HybridCache stores data in process memory (L1) and optionally in Redis (L2). The L1 hit is nanosecond-scale, and the L2 is the fallback when L1 expires or the pod restarts.

- Tag-based invalidation. Call `hybridCache.RemoveByTagAsync("products")` to invalidate all entries tagged with “products”. No need to track individual keys.
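To see what stampede protection means in practice, here is a simplified, per-process sketch of the idea. It is not how HybridCache is implemented. A ConcurrentDictionary stands in for the distributed cache, and the helper name `GetOrCreateAsync`, the key, and the 50 ms delay are made up for the demo. One SemaphoreSlim per key lets a single caller run the factory while the others wait and then read the populated entry.

```csharp
using System;
using System.Collections.Concurrent;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

var store = new ConcurrentDictionary<string, string>();        // stand-in for the distributed cache
var locks = new ConcurrentDictionary<string, SemaphoreSlim>(); // one gate per cache key
var factoryCalls = 0;

async Task<string> GetOrCreateAsync(string key, Func<Task<string>> factory)
{
    // Fast path: no locking on a cache hit.
    if (store.TryGetValue(key, out var cached)) return cached;

    var gate = locks.GetOrAdd(key, _ => new SemaphoreSlim(1, 1));
    await gate.WaitAsync();
    try
    {
        // Re-check after acquiring the gate: an earlier caller may have filled the entry.
        if (store.TryGetValue(key, out cached)) return cached;

        var value = await factory();
        store[key] = value;
        return value;
    }
    finally
    {
        gate.Release();
    }
}

// 100 concurrent requests for the same missing key.
var results = await Task.WhenAll(Enumerable.Range(0, 100).Select(_ =>
    GetOrCreateAsync("products", async () =>
    {
        Interlocked.Increment(ref factoryCalls);
        await Task.Delay(50); // simulated database query
        return "product-list-json";
    })));

Console.WriteLine($"{results.Length} callers, {factoryCalls} factory call(s)"); // 100 callers, 1 factory call(s)
```

A gate like this only coordinates callers inside one process. With five pods, up to five factories could still run for the same key, which is usually an acceptable reduction from hundreds.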

My take: For existing projects already using IDistributedCache with custom extensions, there is no urgency to migrate. The extension methods work fine. But for new projects, HybridCache is the better starting point because it solves stampede protection and L1+L2 layering out of the box. I plan to write a dedicated HybridCache deep dive soon.

Common Pitfalls and Troubleshooting

After running Redis caching in production across multiple projects, here are the pitfalls I see most often:

Redis Connection Timeout

Problem: Your API throws RedisConnectionException or RedisTimeoutException intermittently, causing 500 errors.

Cause: The default connection timeout is 5 seconds, and abortConnect defaults to true. If Redis is briefly unreachable during a deployment or network blip, the connection fails permanently.

Solution: Set abortConnect=false in your connection string so StackExchange.Redis retries in the background. Increase connectTimeout and syncTimeout for high-latency environments. Wrap cache calls in try-catch and fall back to the database:

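```csharp
try
{
    return await cache.GetOrSetAsync(cacheKey, factory, options, cancellationToken);
}
// RedisTimeoutException derives from TimeoutException, not from RedisConnectionException,
// so both types are listed to cover the two failures described above.
catch (Exception ex) when (ex is RedisConnectionException or RedisTimeoutException)
{
    logger.LogWarning(ex, "Redis unavailable. Falling back to database for key: {CacheKey}.", cacheKey);
    return await factory();
}
```

Serialization Failures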

Problem: JsonException when reading cached data after deploying a new version that changed the model.

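Cause: You changed the type of a property on your Product class (for example, from string to int) or added a required member, but Redis still holds JSON written by the old version. System.Text.Json throws when a cached value cannot be converted to the new shape. Note that a plain added or renamed property does not throw by default: the missing value is silently left at its default, which can be an even harder bug to spot.

Solution: Use a versioned cache key pattern (e.g., "v2:products") when making breaking model changes, so new code never reads old payloads and the old entries simply expire. Setting PropertyNameCaseInsensitive = true on JsonSerializerOptions helps when only the casing of property names changed. DefaultIgnoreCondition = JsonIgnoreCondition.WhenWritingNull only affects serialization, so it does not make reads more lenient.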
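A versioned key scheme can be as small as one constant that every key builder shares. The sketch below is one possible shape. The helper names and the "v2" value are hypothetical, and the key formats follow the "products" and "product:{id}" keys used earlier in the article.

```csharp
using System;

// Bump this whenever a cached model changes in a breaking way (hypothetical convention).
const string SchemaVersion = "v2";

// Key builders that prefix every cache key with the current schema version.
string AllProductsKey() => $"{SchemaVersion}:products";
string ProductKey(Guid id) => $"{SchemaVersion}:product:{id}";

Console.WriteLine(AllProductsKey());                // v2:products
Console.WriteLine(ProductKey(Guid.Empty));          // v2:product:00000000-0000-0000-0000-000000000000
```

After a deployment that bumps the version, the new code only reads and writes the new keys. Entries under the old prefix are never deserialized again and disappear when their expiration elapses.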

Large Objects Consuming Redis Memory

Problem: Redis memory usage spikes to several GB, and eviction starts dropping important keys.

Cause: Caching entire database tables or large object graphs without size awareness. A 1,000-item product list serialized to JSON can be 500KB+ per cache entry.

Solution: Cache only what you need. Use DTOs instead of full entity graphs. Set maxmemory and maxmemory-policy in your Redis configuration. I use allkeys-lru as the eviction policy so Redis automatically removes the least-recently-used keys when memory is full.
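Before caching a list, it can help to measure what the payload will weigh in Redis. The sketch below uses only System.Text.Json and compares a full row against a trimmed projection. The anonymous types and the 400-character description are made-up stand-ins for a real Product entity, so the printed sizes are illustrative only.

```csharp
using System;
using System.Linq;
using System.Text.Json;

// Hypothetical rows standing in for a full Product entity with a long description.
var products = Enumerable.Range(1, 1000)
    .Select(i => new
    {
        Id = Guid.NewGuid(),
        Name = $"Product {i}",
        Description = new string('x', 400),
        Price = i * 1.5m
    })
    .ToList();

// Trimmed projection: only the fields a list view would need.
var summaries = products.Select(p => new { p.Id, p.Name, p.Price }).ToList();

// These byte arrays are what would actually be sent to Redis.
var fullBytes = JsonSerializer.SerializeToUtf8Bytes(products);
var slimBytes = JsonSerializer.SerializeToUtf8Bytes(summaries);

Console.WriteLine($"Full entities: {fullBytes.Length / 1024} KB");
Console.WriteLine($"Summaries:     {slimBytes.Length / 1024} KB");
```

Running a check like this against your real DTOs gives you a per-entry figure to multiply by the expected number of keys when choosing a maxmemory value.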

Stale Data After Writes

Problem: Users create a new product but the product list still shows the old data.

Cause: The write operation did not invalidate the cached product list. This is the #1 caching bug I see in production.

Solution: Call cache.RemoveAsync(cacheKey) in every write method that affects cached data. Make it a pattern: never save to the database without considering which cache entries to invalidate. For complex invalidation scenarios, consider HybridCache’s tag-based invalidation.
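The CreateAsync method shown earlier only needs to drop the list key. An update or delete touches two entries: the item and the list that contains it. Here is a minimal sketch of that pattern, again using MemoryDistributedCache (Microsoft.Extensions.Caching.Memory package) as a stand-in so it runs without Redis. The helper name `InvalidateProductAsync` is hypothetical.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Distributed;
using Microsoft.Extensions.Caching.Memory;
using Microsoft.Extensions.Options;

// In-process stand-in for the Redis-backed cache.
IDistributedCache cache = new MemoryDistributedCache(
    Options.Create(new MemoryDistributedCacheOptions()));

// Removes every entry that can contain the changed product.
async Task InvalidateProductAsync(Guid id, CancellationToken ct = default)
{
    await cache.RemoveAsync($"product:{id}", ct); // the single-item entry
    await cache.RemoveAsync("products", ct);      // the list that includes it
}

var id = Guid.NewGuid();
await cache.SetStringAsync("products", "[placeholder list payload]");
await cache.SetStringAsync($"product:{id}", "{placeholder item payload}");

// Call this after SaveChangesAsync in an update or delete method.
await InvalidateProductAsync(id);

var listAfter = await cache.GetStringAsync("products");
var itemAfter = await cache.GetStringAsync($"product:{id}");
Console.WriteLine(listAfter is null && itemAfter is null); // True
```

Keeping the invalidation in one helper per entity makes it harder to forget a key when a new write method is added.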

Redis Single-Threaded Bottleneck

Problem: Redis CPU hits 100% and response times degrade across all application instances.

Cause: Redis is single-threaded for command processing. If you are running expensive operations (large KEYS * scans, huge sorted set operations, or Lua scripts) alongside caching, they block each other.

Solution: Never use KEYS * in production. Use SCAN instead. Keep cached values small. If you need both caching and heavy data operations, run separate Redis instances. For read-heavy workloads, add read replicas.

Docker Data Loss on Container Restart

Problem: All cached data disappears when the Redis container restarts.

Cause: Redis runs in-memory by default. Without persistence configured, a container restart means a cold cache.

Solution: Enable AOF persistence with --appendonly yes in your Docker command or compose file. Mount a volume for /data so the AOF file persists on the host. For production, use both RDB snapshots and AOF for durability.

Global Exception Handling in ASP.NET Core

When Redis or the database layer fails, you need consistent error responses. Learn how to set up global exception handling in your API.

Key Takeaways

- Distributed caching with Redis solves the multi-instance consistency problem that in-memory caching cannot. All pods share the same cache, eliminating inconsistent data behind load balancers.

- Redis adds 1-3ms of latency per cache call compared to nanosecond in-memory hits, but it is still 3-10x faster than database queries for list operations and complex queries.

- Always set abortConnect=false in your Redis connection string. This prevents permanent connection failures during temporary network issues and lets StackExchange.Redis retry in the background.

- Build extension methods for IDistributedCache to add GetOrSetAsync and TryGetValue<T>. The raw interface only works with byte[] arrays, which is painful without helper methods.

- Invalidate cache entries on every write operation. Unlike IMemoryCache, you cannot mutate cached references directly because Redis stores serialized bytes, not object references.

- For new .NET 10 projects, consider HybridCache first. It provides L1 in-memory + L2 Redis, built-in stampede protection, and tag-based invalidation without custom extensions.

- Redis is not always faster than the database for single-row lookups. The benchmark showed ~1,200us for a Redis hit vs ~500us for a primary key database fetch. Redis pays for itself on expensive queries, list operations, and multi-table joins where database time exceeds the serialization overhead.

Summary

Distributed caching with Redis is the natural next step when your ASP.NET Core application grows beyond a single instance. The IDistributedCache interface with custom extension methods gives you a clean, type-safe API for cache operations, and the BenchmarkDotNet numbers confirm that Redis is 3-10x faster than database queries for list operations despite the serialization overhead.

The complete source code, including the BenchmarkDotNet project and Docker compose file, is available in the companion repository.

If you found this helpful, share it with your colleagues. Happy Coding :)

In-Memory Caching in ASP.NET Core .NET 10

If you have not read the prerequisite article yet, start here. It covers IMemoryCache, GetOrCreateAsync, cache invalidation strategies, and BenchmarkDotNet results.

Response Caching with MediatR Pipeline Behavior

Automate caching at the request handler level using MediatR's pipeline behaviors. Every query response gets cached with zero changes to your handlers.

What is distributed caching in ASP.NET Core?

Distributed caching in ASP.NET Core stores cached data in an external server (like Redis) that is shared across all application instances. Unlike in-memory caching which is scoped to a single process, distributed caching ensures consistent data regardless of which pod serves the request. It uses the IDistributedCache interface and supports providers like Redis, SQL Server, and NCache.

How do I set up Redis caching in .NET 10?

Install the Microsoft.Extensions.Caching.StackExchangeRedis NuGet package (version 10.0.0), then call builder.Services.AddStackExchangeRedisCache() in Program.cs with your Redis connection string. The connection string format is 'localhost:6379,password=yourpassword,abortConnect=false'. You also need a running Redis instance, which you can start with Docker using 'docker run --name redis -d -p 6379:6379 redis'.

What is the difference between IMemoryCache and IDistributedCache?

IMemoryCache stores data in the application's process memory with zero network overhead and zero serialization - cache hits are nanosecond-scale. IDistributedCache stores data externally (in Redis, SQL Server, etc.) with network round-trips and serialization overhead, adding 1-5ms per call. The key trade-off is that IMemoryCache is scoped to a single instance, while IDistributedCache is shared across all instances and survives restarts.

How much latency does Redis add compared to in-memory caching?

Based on BenchmarkDotNet results, a Redis cache hit takes approximately 1,200 microseconds for a single item compared to 0.02 microseconds for an IMemoryCache hit. That is roughly 60,000x slower in isolation. However, Redis is still 3-10x faster than database queries for list operations (3,500us vs 12,000us for 1,000 items). The overhead comes from network round-trips and JSON serialization/deserialization.

Should I use Redis or HybridCache in .NET 10?

For new .NET 10 projects, HybridCache is the recommended starting point. It combines L1 in-memory caching with an optional L2 Redis backend, includes built-in stampede protection, and offers tag-based invalidation via RemoveByTagAsync. For existing projects already using IDistributedCache with Redis, there is no urgency to migrate - your current setup works fine. Use Redis directly when you need its advanced features like pub/sub, sorted sets, or Lua scripting.
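
For a feel of what that looks like, here is a small sketch using GetOrCreateAsync with a tag and RemoveByTagAsync to invalidate it. ProductCatalog, ProductDto, the "products:all" key, and the "products" tag are hypothetical names for this example, and it assumes HybridCache is registered with builder.Services.AddHybridCache() from the Microsoft.Extensions.Caching.Hybrid package.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Hybrid;

// Hypothetical DTO for illustration only.
public record ProductDto(int Id, string Name, decimal Price);

public class ProductCatalog(HybridCache cache)
{
    // Assumed tag shared by every product-related cache entry.
    private static readonly string[] ProductTags = ["products"];

    // Returns the cached list, running the loader only on a cache miss.
    public async Task<List<ProductDto>> GetAllAsync(
        Func<CancellationToken, ValueTask<List<ProductDto>>> loadFromDatabase,
        CancellationToken ct = default)
    {
        return await cache.GetOrCreateAsync(
            "products:all",
            loadFromDatabase,
            tags: ProductTags,
            cancellationToken: ct);
    }

    // Invalidates every entry that was stored with the "products" tag.
    public ValueTask InvalidateAsync(CancellationToken ct = default) =>
        cache.RemoveByTagAsync("products", ct);
}
```

Concurrent callers asking for the same key share a single loader invocation, which is the stampede protection you would otherwise have to write yourself on top of IDistributedCache.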

How do I run Redis locally with Docker?

Run 'docker pull redis' to download the image, then 'docker run --name redis -d -p 6379:6379 redis redis-server --requirepass yourpassword' to start a container with password authentication. For a more maintainable setup, use docker-compose with a YAML file that includes volume mounting for data persistence and the --appendonly yes flag for AOF persistence. Access the Redis CLI with 'docker exec -it redis sh' followed by 'redis-cli -a yourpassword'.

How do I handle Redis connection failures in production?

Set abortConnect=false in your Redis connection string so StackExchange.Redis retries in the background instead of failing permanently. Wrap cache calls in try-catch blocks and fall back to the database when Redis is unavailable. Increase connectTimeout and syncTimeout for high-latency environments. Use Redis Sentinel for automatic failover in production. Never let a Redis outage take down your API - cache failures should degrade performance, not availability.
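
One way to wire up that fallback is a helper that treats any cache failure as a miss. The sketch below is an assumption-laden example: GetOrLoadAsync is a hypothetical extension method, not part of IDistributedCache, and a real version would log the swallowed exceptions.

```csharp
using System;
using System.Text.Json;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Distributed;

public static class ResilientCacheExtensions
{
    // Hypothetical helper: a cache failure degrades to a database call, never to an error.
    public static async Task<T> GetOrLoadAsync<T>(
        this IDistributedCache cache,
        string key,
        Func<CancellationToken, Task<T>> loadFromDatabase,
        TimeSpan ttl,
        CancellationToken ct = default)
    {
        try
        {
            var cached = await cache.GetAsync(key, ct);
            if (cached is not null)
            {
                var hit = JsonSerializer.Deserialize<T>(cached);
                if (hit is not null) return hit;
            }
        }
        catch (Exception ex) when (ex is not OperationCanceledException)
        {
            // Redis unreachable or payload unreadable: fall through to the database.
        }

        var value = await loadFromDatabase(ct);

        try
        {
            var bytes = JsonSerializer.SerializeToUtf8Bytes(value);
            await cache.SetAsync(
                key, bytes,
                new DistributedCacheEntryOptions { AbsoluteExpirationRelativeToNow = ttl },
                ct);
        }
        catch (Exception ex) when (ex is not OperationCanceledException)
        {
            // Failing to populate the cache must not fail the request.
        }

        return value;
    }
}
```

Cancellation is deliberately not swallowed, so an aborted request still stops early. During a long outage every request hits the database, so watch database load while Redis is down.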

What serialization format should I use with Redis caching?

JSON via System.Text.Json is the most common choice for IDistributedCache because it is human-readable (easy to debug in Redis Insight), built into .NET with no extra packages, and fast enough for most workloads. If you need smaller payloads and faster serialization, consider MessagePack or protobuf-net. With System.Text.Json, keep WriteIndented = false (the default) to minimize payload size, and set DefaultIgnoreCondition = JsonIgnoreCondition.WhenWritingNull to skip null properties.
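
A minimal sketch of those settings, wrapped in a hypothetical CacheJson helper with a made-up CachedProduct record for demonstration:

```csharp
using System.Text.Json;
using System.Text.Json.Serialization;

// Hypothetical cached type with an optional field.
public record CachedProduct(int Id, string Name, string? Description);

public static class CacheJson
{
    // One shared instance: JsonSerializerOptions caches type metadata, so reuse it.
    public static readonly JsonSerializerOptions Options = new()
    {
        WriteIndented = false, // already the default, stated here for clarity
        DefaultIgnoreCondition = JsonIgnoreCondition.WhenWritingNull
    };

    // Serializes straight to UTF-8 bytes, the shape IDistributedCache expects.
    public static byte[] ToBytes<T>(T value) =>
        JsonSerializer.SerializeToUtf8Bytes(value, Options);

    // Reads the bytes back; returns null if the payload was the JSON literal null.
    public static T? FromBytes<T>(byte[] bytes) =>
        JsonSerializer.Deserialize<T>(bytes, Options);
}
```

With these options, a CachedProduct whose Description is null is written without a Description property at all, which adds up when you cache long lists of sparse objects.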
